Voice Assistants

We have forever had to learn the language of technology, be it keyboard, mouse or touchscreen. Voice Assistants have turned this around. With voice, technology has to learn to understand us, understand our language.

Our understanding of voice assistants or voice-based products is typically limited to Siri, Alexa or Google Assistant style general-purpose Assistants. The reality is there's a bigger picture out there that many of us are not familiar with. In this blog, we will present you with a more holistic view of Voice Assistants.

What are Voice Assistants?

Voice Assistants have become synonymous with Google Home and Amazon Echo. This perception could not be more incorrect. The actual voice assistants are the underlying technologies that power these smart speakers: Google Assistant and Amazon Alexa, respectively.

Today voice assistants are not limited just to smart speakers but are also available in cars, household devices, smartphones, and several apps.

Voice Assistants are a subset of Virtual Assistants or Intelligent Personal Assistants. These virtual assistants can take inputs in many different ways:

- Text - Text-based intelligent virtual assistants are called chatbots.

- Voice - These types of assistants are called Voice Assistants.

- Image - These assistants take an image as input, e.g. Google Lens and Bixby Vision.

We will leave chatbots and image-based assistants to other experts. Let's get back to Voice Assistants.

A Voice Assistant is a virtual assistant that uses speech recognition, natural language processing and speech synthesis to take actions to help its users.

Essential Conditions for being a Voice Assistant:

Not every voice solution is a voice assistant, but every voice assistant is a voice solution. To be called a Voice Assistant, a voice solution needs to meet these conditions:

- Voice as input - Primary mode of input for a Voice Assistant should be through Voice.

- Conversational - Voice Assistants should be able to have natural and contextual two-way communication with the user.

- Confirmational - Voice Assistants should be able to confirm, clarify and answer the user with context.
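To make the "conversational" and "confirmational" conditions concrete, here is a minimal sketch of a dialogue that keeps context across turns, asks a clarifying question when something is missing, and confirms before acting. The `Conversation` class, the `item` and `quantity` slots, and the reply wording are all hypothetical, and the sketch assumes speech has already been turned into structured slots.

```python
from typing import Dict, List, Optional

# Hypothetical pieces of information an order needs before it can be placed.
REQUIRED_SLOTS: List[str] = ["item", "quantity"]


class Conversation:
    """Keeps context between turns so the user never has to repeat themselves."""

    def __init__(self) -> None:
        self.slots: Dict[str, str] = {}

    def hear(self, new_slots: Optional[Dict[str, str]] = None,
             confirm: bool = False) -> str:
        # Contextual: merge what was just said into what is already known.
        self.slots.update(new_slots or {})

        # Clarify: ask for the first piece of information still missing.
        missing = [s for s in REQUIRED_SLOTS if s not in self.slots]
        if missing:
            return f"Sure. What {missing[0]} would you like?"

        # Confirm: read the request back before acting on it.
        if not confirm:
            return (f"You want {self.slots['quantity']} x "
                    f"{self.slots['item']}. Shall I go ahead?")

        return "Done, your order is placed."


chat = Conversation()
print(chat.hear({"item": "milk"}))      # asks for the quantity
print(chat.hear({"quantity": "2"}))     # reads the order back
print(chat.hear(confirm=True))          # acts only after confirmation
```

A voice solution that only maps one utterance to one action, with no memory of earlier turns, would skip the first two branches entirely.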

History of Voice Assistants:

Voice assistant integration has taken two different routes - one is on the smartphone, and the other is on smart speakers (and other connected devices). Apple, Google and Amazon have been focused on making their Voice Assistants ubiquitous through general-purpose assistants.

Apple has taken the route of getting Siri on all Apple devices. They even launched the HomePod, a smart speaker that has not gained much traction.

Google is uniquely positioned to catapult the adoption of Google Assistant: it has made the assistant available, and almost mandatory, across all Android smartphones, and it has found success in the smart speaker market with the Google Home lineup.

Amazon had an early mover advantage in the world of smart speakers where they were almost two years ahead of Google and found success with Alexa in Echo devices.

The latter two have quickly moved to offer voice assistants across various third-party devices in different form factors to appeal to different user preferences and contexts.

We don't want to bore you with all the history stuff. We could have written a bunch of paragraphs going into details of what happened when, but it's best if we give you a quick graphical snapshot with this fantastic timeline created by our friends at voicebot.ai.

The voice assistant journey can be broken down into two phases:

Phase 1 was all about getting consumers introduced to the idea of using voice to perform tasks.

Phase 2 is about voice becoming a pervasive interaction mode with more capabilities, used more frequently on more devices, in apps, and in different contexts.

How do Voice Assistants work?

Do you ever wonder how a single command like 'Alexa, how is the weather outside?' is interpreted by your smart speaker? Don't worry. We will break it down so you can understand how this magic happens. We have abstracted away the nuances of how this works and simplified it into four steps:

- Automatic Speech Recognition (ASR)

- Natural Language Processing (NLP)

- Desired business logic via hooks

- Text To Speech (TTS)
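The four steps above can be sketched as a small pipeline. This is only an illustration under stated assumptions: every function here is a hypothetical stand-in (the "audio" is plain text bytes, the NLP is keyword matching, and the weather answer is hard-coded), not how Alexa or any other assistant is actually implemented.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class Intent:
    name: str                                   # e.g. "get_weather"
    slots: Dict[str, str] = field(default_factory=dict)


def recognize_speech(audio: bytes) -> str:
    """ASR stand-in: a real system would run a speech model here."""
    # Assumption: the 'audio' is UTF-8 text so the sketch stays runnable.
    return audio.decode("utf-8")


def understand(text: str) -> Intent:
    """NLP stand-in: keyword matching instead of a trained model."""
    if "weather" in text.lower():
        return Intent("get_weather", {"location": "outside"})
    return Intent("unknown")


def get_weather(intent: Intent) -> str:
    """Business-logic hook: would normally call a weather service."""
    return "It is sunny outside."               # hard-coded placeholder


# Hooks connect a recognised intent to the code that fulfils it.
HOOKS: Dict[str, Callable[[Intent], str]] = {"get_weather": get_weather}


def synthesize(text: str) -> bytes:
    """TTS stand-in: returns bytes in place of real audio."""
    return text.encode("utf-8")


def handle_utterance(audio: bytes) -> bytes:
    text = recognize_speech(audio)              # 1. ASR
    intent = understand(text)                   # 2. NLP
    hook = HOOKS.get(intent.name)               # 3. business logic via hooks
    reply = hook(intent) if hook else "Sorry, I can't help with that yet."
    return synthesize(reply)                    # 4. TTS


print(handle_utterance(b"Alexa, how is the weather outside?"))
```

Each stage only depends on the output of the previous one, which is why component providers can supply one stage (for example ASR) without owning the rest.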

Voice Assistants gaining popularity

A report by Activate Forecast in 2018 mentioned how smart speakers have been adopted faster than smartphones in the United States of America. It is perhaps the quickest adoption of any new technology in the modern world.

This shows the paradox of intelligent assistants, i.e., poor usability and high adoption.

In recent times, however, trends have been changing: Amazon saw a surprisingly big 51.7% year-over-year dip in US shipments of Alexa-powered smart speakers in 2021.

It is believed that the drop might be tied to the pandemic. The assumption is that smart speaker adoption rose in 2020 because large parts of the American population studied and worked from home during the lockdowns.

Over the years, smart speaker ownership has kept increasing, but the rate of increase has been slowing. In 2019, 20% of US respondents had a smart speaker; 36% owned one in 2020, and in 2021 the number increased to 44%. Ownership gained 16 percentage points in the first year but only 8 in the second.

The drop in shipments was steepest in Q4 2021, when shipments of Amazon Alexa smart speakers fell 78.9% year over year to 4 million, despite the holiday shopping season.
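The slowdown in ownership growth quoted above is easier to see as year-on-year gains in percentage points. A minimal sketch, using only the three survey figures from the text (the variable names are ours):

```python
# Share of US respondents owning a smart speaker, as quoted in the text.
ownership = {2019: 20, 2020: 36, 2021: 44}

years = sorted(ownership)
# Gain in percentage points from each year to the next.
gains = {
    later: ownership[later] - ownership[earlier]
    for earlier, later in zip(years, years[1:])
}

print(gains)  # {2020: 16, 2021: 8}
```

Ownership is still rising, but the gain halved from one year to the next.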

Here is another useful data point: according to Voicebot.ai, there are twice as many monthly active voice assistant users on smartphones as on smart speakers, and voice usage in cars also exceeds the use of smart speakers.

Usage of Voice in the Developing world

Smart speakers are yet to take off in the developing world. They might be all the rage in the West, but voice assistants on smartphones are still king here. In India, the masses cannot afford a stand-alone Echo Dot or a Google Home, but they can use the same voice assistant through their existing smartphone.

According to an estimate by eMarketer, a quarter of India's population will use smartphones. Here are a few points from the report, published in 2018:

- There were 291.6 million smartphone users in India by the end of 2017.

- The number of smartphone users in India is estimated to hit 337 million by the end of 2018.

- The number of smartphone users in India would reach 490.9 million by 2022.

Jumping to 2022, Deloitte’s 2022 Global TMT study predicts that India will have 1 billion smartphone users by 2026, with rural areas driving the sale of internet-enabled phones.

As of 2021, India had a total of 1.2 billion mobile subscribers, of which about 750 million were smartphone users.

For context, India can be divided into two parts:

1) Metros or India-1

2) Non-Metros or India-2

Voice Assistants will help the 'Next Billion Users', or NBUs as we like to call them, who are coming online for the first time, to use apps. These NBUs are primarily present in India-2 and are catching up with the metros (India-1) when it comes to internet usage. The three critical pillars enabling this transition are voice, vernacular, and video.

Google's Year in Search report highlights the importance of targeting these NBUs. Advancements in speech recognition have enabled a better understanding of vernacular Indian languages. Leveraging voice will help NBUs who are not familiar with a smartphone interact with apps intuitively. 70% of the users are bypassing laptops and desktops and directly using smartphones.

During the 2019 cricket season, Google Assistant received over 100 million cricket queries as people asked for news and live score updates. This means people of all ages and geographies can engage with voice-enabled technology more easily because it mimics normal conversation (data from Google Insights and the Voice Playbook).

India has a vast potential to be the biggest market for voice search with an expected YoY growth of 270%, as revealed by Google.

Vernacular language speakers will account for roughly 75% of India's internet user base by 2021. Research by KPMG and Google points out that by 2021, nine out of ten new internet users in the country will be native language speakers, and Google search trends show a significant move in this direction as well. This study presents an exciting opportunity that can be tapped by voice search to create disruption, especially in a country like India, where there are multiple regional languages, and people prefer them over English for day-to-day communications.

According to a report by MMA and Isobar, over 500 million people globally already use Google Assistant every month, with Hindi second only to English as the most commonly used language.

According to a report by Recogn, the market research division of an agency owned by Dentsu Aegis Network (DAN) India, the market is expected to grow 40.47% year over year (YoY), from Rs. 149.95 Cr in 2019 to Rs. 210.63 Cr by the end of 2020. The report also states that 76% of the users are familiar with speech and voice recognition technology.

According to Juniper Research, this number will only grow by three times over the coming years. Interestingly, the firm also states there will be around 8 billion digital voice assistants in use by 2023, which is a significant increase from 2.5 billion assistants in use at the end of 2018.
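As a quick sanity check on the growth figures quoted above, the arithmetic can be reproduced in a few lines. This sketch assumes both Recogn values are in crore rupees and that the Juniper figures are in billions; the function name is ours.

```python
def yoy_growth_pct(previous: float, current: float) -> float:
    """Year-over-year growth as a percentage of the earlier value."""
    return (current - previous) / previous * 100


# Recogn: Rs. 149.95 Cr (2019) to Rs. 210.63 Cr (2020).
market_growth = yoy_growth_pct(149.95, 210.63)

# Juniper Research: 2.5 billion assistants (2018) to 8 billion (2023).
assistant_multiple = 8 / 2.5

print(round(market_growth, 2))   # 40.47
print(assistant_multiple)        # 3.2
```

Both results agree with the text: the market figures imply the stated 40.47% growth, and 8 billion is a little over three times 2.5 billion.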

Types of Voice Assistants

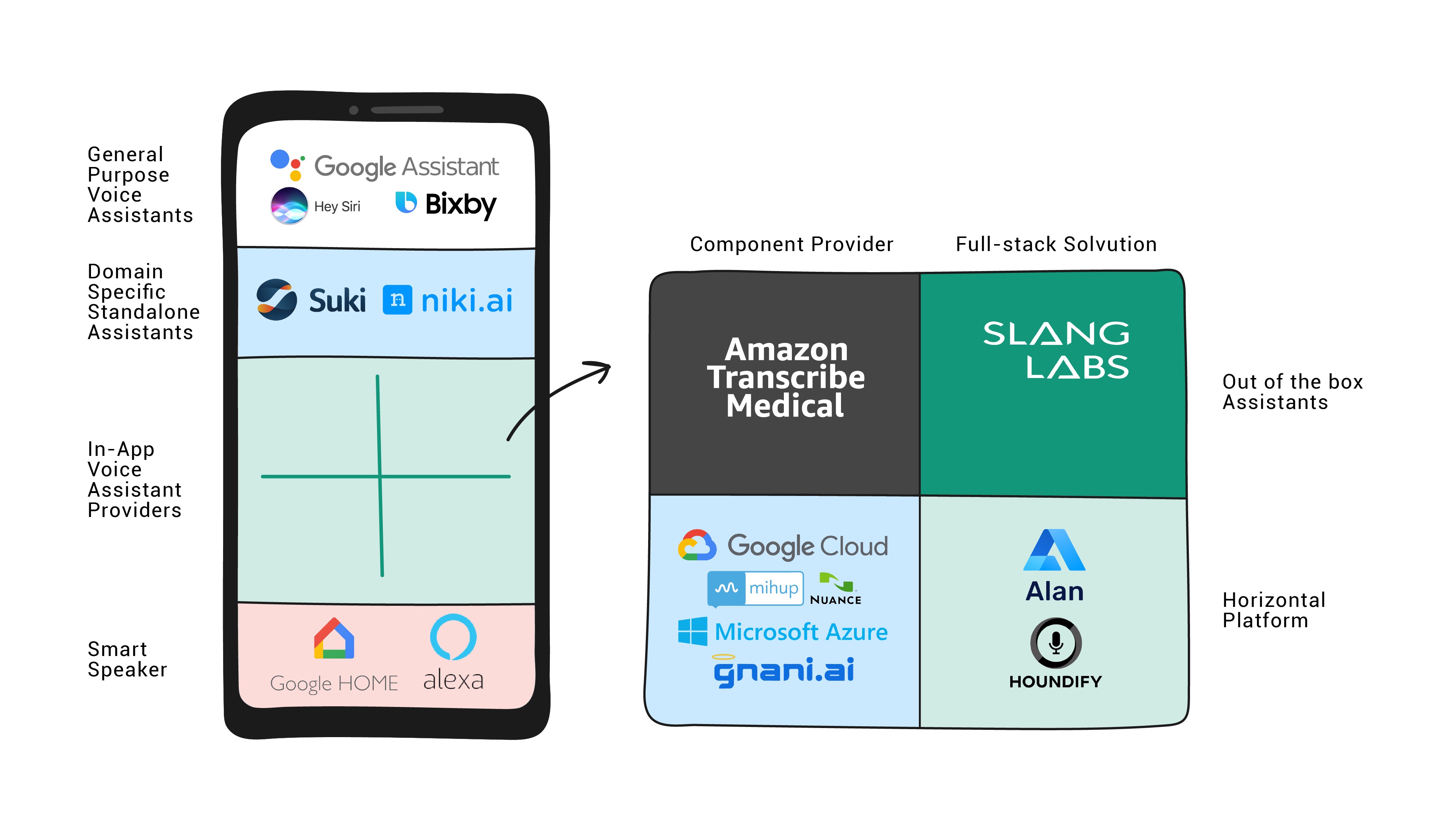

If we try to understand the types of VAs available, there are broadly three categories into which we can divide them:

General Purpose Voice Assistants

Google Assistant, Siri and Bixby, on Android, iPhone and Samsung devices respectively, are great examples of general-purpose Voice Assistants present on smartphones, smart speakers and other smart devices.

All of these Voice assistants help you with general things like setting the alarm, scheduling events, making calls, launching apps, amongst others.

Google Assistant also has a feature called App Actions, which can trigger actions inside specific apps using deep links. Recently, Alexa announced a similar capability with Alexa for Apps.
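To illustrate the general idea of triggering an in-app action through a deep link, here is a minimal sketch that turns a recognised intent into a link an app could open. It is not the App Actions or Alexa API: the intent names, the `exampleapp` URL scheme and the paths are all hypothetical.

```python
from urllib.parse import urlencode

# Hypothetical mapping from assistant intents to in-app deep-link paths.
DEEP_LINK_PATHS = {
    "order_item": "order",
    "track_order": "orders/track",
}


def build_deep_link(intent: str, params: dict, scheme: str = "exampleapp") -> str:
    """Build a deep link for a known intent; raises KeyError for unknown ones."""
    path = DEEP_LINK_PATHS[intent]
    query = urlencode(params)               # e.g. item=milk&qty=2
    link = f"{scheme}://{path}"
    return f"{link}?{query}" if query else link


print(build_deep_link("order_item", {"item": "milk", "qty": 2}))
# exampleapp://order?item=milk&qty=2
```

The assistant does the understanding; the app only has to handle the link it already knows how to open.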

Unfortunately, Siri doesn’t have any such feature currently and has poor performance compared to both Alexa and Google Assistant.

Increasingly, Google Assistant and Alexa are shifting strategy and focusing on doing tasks inside apps, encroaching on the niche carved out by in-app Voice Assistants.

In-app Voice Assistants

Witnessing the popularity of Voice Assistants, some brands have started adding Voice Assistants to their primary mode of communication: their apps and websites. Trainman, Flipkart, Amazon and Bank of America have added in-app voice assistants, and yuyiii.com has added a Voice Assistant to their website.

These voice assistants are present in an app to ease or elevate the customer experience.

With in-app voice assistants gaining popularity, we decided to do an in-depth analysis of some of them which are present in the most popular apps in India - Udaan, MyJio, Amazon, YouTube, Trainman, Gaana and JioMart.

Owned

These in-app Voice Assistants are built in-house by businesses, mostly using the services of component providers like Nuance, Google Cloud and others.

Some of the companies which have launched their in-app voice assistants are Bank of America with Erica, Capital One with Eno, Flipkart and YouTube.

In Dec 2019, Erica had more than 10 million users and was on track to complete 100 million client requests. In 2020, it added 1 million users per month from March to May.

Managed and White Labelled

Many small and large players, like Trainman and Big Basket, have started adding white-labelled or managed in-app Voice Assistants to their apps.

They can use full-stack solutions like Slang Labs, which provides in-app voice assistants for specific domains like eCommerce and travel. The speed of execution and well-thought-out conversation designs make this option attractive for businesses.

Some businesses also add custom-built assistants on top of platforms like Haptik, Houndify, Mycroft AI or Alan to their apps.

Stand Alone Voice Assistants

These voice assistants don't sit inside an app, but they are the primary communication channel with the users in a stand-alone app. They are self-sufficient voice assistants usually built for limited use cases and specific domains.

Some examples of such assistants are Suki and Niki.ai. Suki is a stand-alone voice assistant in an app built explicitly for doctors. Suki used component providers like Amazon Transcribe Medical to create this Voice Assistant.

Smart Speakers and Smart Devices

First Party Smart Devices

In most cases, these devices are sold by the companies that make the voice assistants, Amazon and Google, and have Alexa or Google Assistant built in.

Nest devices by Google and Echo devices by Amazon are examples of first-party products. Some of these devices are inexpensive and retail for as low as $25.

Amazon has recently launched wearable Echo devices such as the Echo Loop (a smart ring) and Echo Frames (smart eyeglasses). Google and Amazon have also launched wireless earbuds with Assistant and Alexa built in, respectively, kicking off the race for smart wearables.

Second Party Smart Devices - Devices with built-in Voice Assistants

The key difference between 2P and 1P devices is that 2P devices are manufactured by different OEMs and have integrated general-purpose voice assistants like Alexa and Google Assistant.

Examples of such devices are smart TVs, smart watches and smart refrigerators. Some companies like Sonos, Harman Kardon and Bose even have smart speakers with both Alexa and Google Assistant built in.

Third Party Smart Devices - Support for Voice Assistants

These third-party devices don't have a voice assistant built into them, but some of them can be controlled by one. They are popularly called smart devices or IoT devices and are connected to the internet.

Markets have been flooded with such devices in the last few years: smart bulbs, refrigerators, toilets, faucets, microwaves, dryers, air conditioners and more.

According to IDC, the premier global market intelligence firm, India is the fastest-growing large market globally. India's smart home devices market grew 78% YOY in 2019.

A large part of this growth has come from third-party smart devices, especially smart lighting devices, which have seen a nearly 248% increase from 2018. It's also important to note that smart speaker shipment has grown by 53%. About 12 million smart home devices were shipped in India in 2019.

One more interesting trend from the Indian market is around hearables. Almost all hearables support Google Assistant and Alexa. India is the 4th largest market for hearables, and this category grew 444% in 2019.

Domain Specific vs. Domain Agnostic Voice Assistants

Domain Agnostic Voice Assistant

Tech giants like Google, Amazon, and Apple have created their general-purpose assistants, also known as domain agnostic voice assistants, with the help of large amounts of available data. These types of assistants work across domains to do generic tasks.

Domain Specific Voice Assistant

As the name suggests, Domain-specific voice assistants are specific to a particular domain, e.g., eCommerce, Travel, Healthcare or Hospitality.

They are purpose-built for these domains and optimised for them. These voice assistants have much higher accuracy and support use cases relevant to the domain, leading to a better user experience. This is possible because a particular domain puts boundaries on the information the assistant has to handle.
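One way to picture why a bounded domain helps accuracy: when the assistant knows the only valid answers are items in a small catalogue, it can snap a slightly wrong transcription to the nearest known term. The sketch below uses Python's standard `difflib` for fuzzy matching; the grocery vocabulary and the `snap_to_domain` helper are hypothetical, and real domain-specific assistants use far more sophisticated models.

```python
from difflib import get_close_matches
from typing import List, Optional

# Hypothetical vocabulary for a grocery assistant.
GROCERY_VOCABULARY: List[str] = ["tomato", "potato", "onion", "rice"]


def snap_to_domain(heard: str, vocabulary: List[str],
                   cutoff: float = 0.6) -> Optional[str]:
    """Return the closest in-domain term, or None if nothing is similar enough."""
    matches = get_close_matches(heard.lower(), vocabulary, n=1, cutoff=cutoff)
    return matches[0] if matches else None


print(snap_to_domain("tomatoe", GROCERY_VOCABULARY))  # tomato
print(snap_to_domain("xyz", GROCERY_VOCABULARY))      # None
```

A domain-agnostic assistant has no such short list to fall back on, so the same transcription error is much harder to recover from.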

Challenges faced by Voice Assistants

Yes, voice technology has problems. Call them challenges, or call them opportunities that one can tap into, pun intended!

Privacy

Privacy is a huge concern, especially when it comes to smart speakers, because they are always listening for their wake word.

The crucial detail that is often missed out on is the difference between listening and recording. Once these speakers or voice assistants get activated using the wake word, they start recording the audio.

These audio clips are sent to Google or Amazon. These tech giants have exposed the recordings to human reviewers, albeit in an anonymised manner, which is still a significant infringement on privacy. There have already been numerous cases where such recordings have led to privacy issues.
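The difference between listening and recording described above can be sketched as a small loop. This is a simplified illustration, not any vendor's implementation: "frames" are plain words instead of audio, an empty string stands in for silence, and the wake word and buffer size are assumptions.

```python
from collections import deque
from typing import Deque, Iterable, List, Optional

WAKE_WORD = "alexa"     # assumption: any wake word behaves the same way
END_OF_SPEECH = ""      # assumption: an empty frame marks silence


def capture_commands(frames: Iterable[str], buffer_size: int = 3) -> List[List[str]]:
    """Return only the frames spoken between a wake word and the next silence."""
    rolling: Deque[str] = deque(maxlen=buffer_size)  # listening: tiny local buffer
    recordings: List[List[str]] = []
    current: Optional[List[str]] = None              # None means "not recording"

    for frame in frames:
        if current is None:
            rolling.append(frame)        # old frames fall off and are never kept
            if frame.lower() == WAKE_WORD:
                current = []             # wake word heard: start recording
        elif frame == END_OF_SPEECH:
            recordings.append(current)   # silence: stop and keep this clip
            current = None
            rolling.clear()
        else:
            current.append(frame)

    # A clip still open when the stream ends is dropped in this sketch.
    return recordings


stream = ["private", "chat", "alexa", "what", "time", "", "more", "private"]
print(capture_commands(stream))  # [['what', 'time']]
```

Everything said before the wake word and after the silence passes through the rolling buffer and is discarded; only the clip in between would be sent onward.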

Accuracy

Voice Assistants don't always understand what's spoken. There could be many reasons behind this. Sometimes it is because of how we speak, for example our accent. Sometimes it is because the voice assistant simply doesn't know what to do with the question, since it doesn't have any instructions related to the query.

Lack of vernacular Support

Speech recognition, perhaps the most critical component of a Voice Assistant, is not available for a lot of languages spoken around the world. The problem is not only limited to speech recognition but also extends to other critical functional areas of Voice Assistants.

In countries like India, with a massive Indic-language-speaking population, the lack of quality ASR models for vernacular languages is often a limiting factor in providing a good voice experience. In India, voice assistants will not be just a source of convenience but a necessity.

To create innovations in the field of voice assistants and conversational AI in India - we need a Bharat Bhasha stack, similar to the Bharat stack, which enables a myriad of use cases in the fintech space.

Most of the Natural Language Processing is being done after translating spoken utterances from vernacular languages to English. In this process, a lot of contextual nuances are lost or are changed.

Future of Voice Assistants

Advancements in Artificial Intelligence and Machine Learning are truly revolutionizing how we use voice assistants in our daily lives.

With voice now establishing itself as an ultimate mobile experience, businesses are only beginning to understand how they can integrate voice in all their activities. A recent report by PwC reveals that the adoption of voice assistant technology is highest among 18-24-year-olds. But the group that uses voice assistants most frequently is the 25-49-year-old group.

The coming years present many opportunities for voice to grow by leaps and bounds, but a lack of skills and knowledge makes it difficult for businesses to get on board with a voice strategy. For those in it for the long run, voice will present an opportunity to understand consumers and provide experiences to them like never before. The question is, is your brand willing to jump on this opportunity?
